By Xavier Casanova, CEO and Co-founder, Olakai (AI Fund portfolio company)

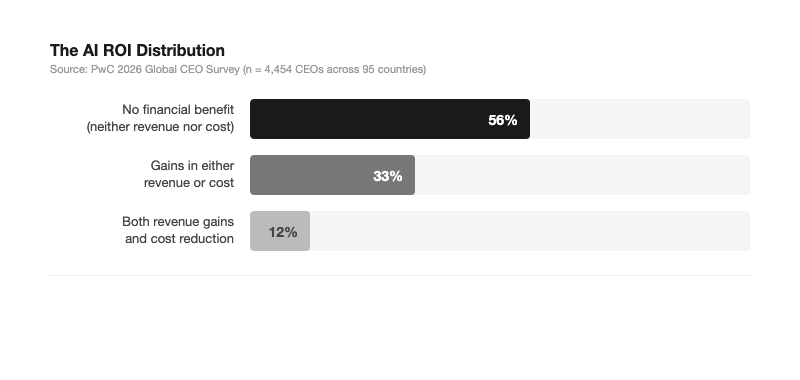

Enterprise AI spending hit $37 billion in 2025, up from $1.7 billion just three years earlier. It is the fastest-scaling software category in history. And yet, according to PwC’s 2026 Global CEO Survey of 4,454 chief executives across 95 countries, 56% report that AI has delivered no significant financial benefit: neither increased revenue nor decreased costs.

Only 12% report achieving both.

The AI ROI Distribution

These numbers are not evidence that AI does not work. They are evidence that most companies have not built the infrastructure to know whether it works. The problem is not technology. It is measurement.

The Sequencing Problem

The most common pattern I see in enterprise AI deployments looks like this: a company buys AI tools, rolls them out to employees, tracks adoption metrics like active users and queries per day, and then six months later asks “what is the ROI?”

By that point, the question is almost unanswerable. Not because there is no value being created, but because nobody defined what value meant before they started. There is no baseline, no control, no attribution model. Usage went up. But usage is not value.
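To make the baseline-and-control point concrete, here is a minimal sketch of a difference-in-differences comparison, one common way to separate a rollout's effect from background drift. It is an illustration, not a method described in the survey or used by any specific company. The function name, the metric (tickets resolved per agent per week), and every number are hypothetical, chosen only so the arithmetic is easy to follow.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Estimate the change attributable to a rollout.

    Each argument is a list of the same outcome metric, measured for
    one group (treated or control) in one period (before or after).
    """
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    # Whatever moved in the control group is assumed to be background
    # drift, so it is subtracted from the treated group's change.
    return treated_change - control_change


# Hypothetical, made-up numbers: tickets resolved per agent per week.
pilot_before = [20, 22, 21]     # team that later received the AI tool
pilot_after = [27, 29, 28]
holdout_before = [20, 21, 22]   # comparable team without the tool
holdout_after = [22, 23, 24]

uplift = diff_in_diff(pilot_before, pilot_after, holdout_before, holdout_after)
print(f"Estimated uplift per agent per week: {uplift:.1f}")
```

The sketch only works if the "before" numbers and the holdout group exist. Both have to be set up before deployment, which is why asking for ROI six months in is usually too late.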

Anthropic’s Economic Index, published in January 2026, offers a useful framework here. Rather than tracking generic activity metrics, their approach measures what they call “economic primitives”: task complexity, the degree of autonomy given to the AI, and success rates. A user summarizing an email (low complexity, low autonomy) has a very different economic footprint than a user delegating a multi-step coding workflow (high complexity, high autonomy).

Most companies do not make this distinction. They count logins and call it adoption. Adoption is not the metric. Impact is the metric. And impact requires a measurement framework that most organizations have not built.
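The gap between counting logins and measuring work can be sketched in a few lines. The example below is an assumption-laden illustration, not Anthropic's methodology: the `AITask` record, the two-level "low"/"high" labels, and the sample data are all hypothetical. It shows the same usage log summarized two ways, once as an adoption count and once as a mix of task types with success rates.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AITask:
    user: str
    complexity: str   # hypothetical label: "low" or "high"
    autonomy: str     # hypothetical label: "low" or "high"
    succeeded: bool


def adoption_count(tasks):
    """The usual adoption metric: number of distinct active users."""
    return len({t.user for t in tasks})


def work_mix(tasks):
    """Group tasks by (complexity, autonomy); report volume and success rate."""
    buckets = defaultdict(list)
    for t in tasks:
        buckets[(t.complexity, t.autonomy)].append(t.succeeded)
    return {
        key: {"tasks": len(results), "success_rate": sum(results) / len(results)}
        for key, results in buckets.items()
    }


# Made-up sample log for illustration only.
tasks = [
    AITask("ana", "low", "low", True),     # e.g. summarizing an email
    AITask("ana", "low", "low", True),
    AITask("ben", "low", "low", True),
    AITask("ben", "high", "high", True),   # e.g. a delegated multi-step workflow
    AITask("ben", "high", "high", False),
]

print(adoption_count(tasks))
for bucket, stats in work_mix(tasks).items():
    print(bucket, stats)
```

The adoption count says only that two people used the tool. The mix shows where the high-complexity, high-autonomy work is happening and how often it succeeds, which is the information an impact metric needs.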

The Attribution Problem

Even companies that define success metrics up front face a second challenge: AI value is distributed, not contained.

When a sales team uses AI to draft outreach emails, an analyst uses it to build a financial model, and a support team uses it to triage tickets, the value shows up across a dozen P&L lines. No single dashboard captures it. No single team owns it. The CFO sees cost lines for AI licenses and infrastructure, but the revenue and efficiency impacts are scattered across the business.

This is fundamentally different from traditional software ROI. When you deploy a CRM, you can measure pipeline velocity and deal close rates. When you deploy an ERP, you can measure process cycle times. AI does not work like that. Its value accrues horizontally across workflows, and the measurement infrastructure for horizontal value does not exist in most companies.

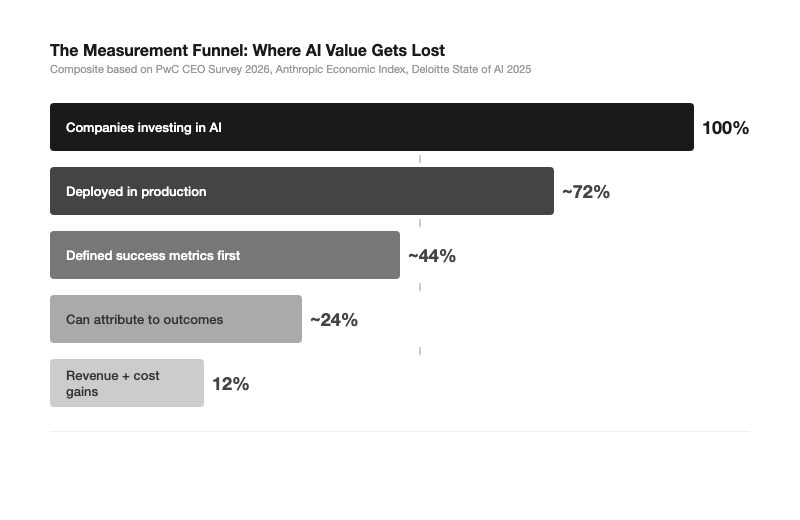

The Measurement Funnel

The drop-off at each stage of this funnel is not a technology failure. It is a measurement failure. Companies that cannot define, attribute, and prove AI value cannot capture it.

The Framework Problem

The third gap is conceptual. Most companies are measuring AI with frameworks designed for the wrong era.

The default metrics for AI ROI are cost savings and headcount reduction. These are the metrics boards understand, so they are the metrics teams report. But they miss the actual value AI creates. When an engineer ships a feature in two days instead of two weeks, the company did not save money on that engineer’s salary. It got more output from the same cost. When a legal team reviews contracts three times faster, the firm did not reduce headcount. It increased capacity without increasing spend.

These are real economic gains. But they are invisible to a measurement framework built around cost reduction. Companies end up in a situation where AI is genuinely making people more productive, but the metrics they report to the board cannot show it. The board sees cost and sees no savings. The team sees impact but cannot prove it.
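The arithmetic behind that blind spot is simple. The sketch below uses the contract-review example with hypothetical figures: the quarterly team cost and contract volumes are invented, and it assumes that reviewing three times faster translates into three times the throughput at unchanged spend. It compares what a cost-reduction metric reports with what a capacity metric reports.

```python
def cost_savings(cost_before, cost_after):
    """What a cost-reduction framework reports."""
    return cost_before - cost_after


def capacity_gain(output_before, output_after):
    """Additional output per period."""
    return output_after - output_before


def cost_per_unit(total_cost, output):
    """Spend divided by units of output in the same period."""
    return total_cost / output


# Hypothetical figures for one quarter; spend is unchanged by assumption.
team_cost = 100_000
contracts_before = 200
contracts_after = 600   # assumes 3x review speed yields 3x throughput

print("Cost savings:", cost_savings(team_cost, team_cost))
print("Extra contracts reviewed:", capacity_gain(contracts_before, contracts_after))
print("Cost per contract before:", cost_per_unit(team_cost, contracts_before))
print("Cost per contract after:", round(cost_per_unit(team_cost, contracts_after), 2))
```

Under these assumptions the savings line reads zero, while capacity triples and the cost per contract falls by two thirds. A board report that carries only the first number will show no return.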

The 12% of CEOs who report both revenue gains and cost reduction from AI are two to three times more likely to have embedded AI extensively across decision-making and demand generation. They did not just deploy tools. They instrumented the workflows. They built the connective tissue between AI activity and business outcomes.

What This Means for AI Strategy in 2026

The next wave of enterprise AI value will not come from better models. The models are already good enough for most business applications. It will come from better visibility into what AI is actually doing inside organizations.

Three shifts are required:

From adoption metrics to impact metrics. Active users and query counts tell you nothing about value. Companies need to measure what Anthropic calls the “work type mix”: what tasks are being handled by AI, at what complexity level, and with what outcomes. Google’s recent update exposing Gemini usage metrics in admin dashboards is an early move in this direction, but it is only the beginning.

From departmental measurement to cross-functional attribution. AI value does not live in one department’s budget. Companies need measurement infrastructure that can trace AI-assisted work across teams and connect it to business outcomes. This requires shared definitions of value, shared data, and, most importantly, a single owner who is accountable for the result.

From cost-reduction framing to capability-expansion framing. The right question is not “did AI save us money?” It is “what can we do now that we could not do before, and what is that worth?” The companies that figure out how to measure capability expansion, rather than just cost compression, will compound their advantage over time.

AI spending is not slowing down. The question is whether companies will build the measurement infrastructure to know what they are getting for it. The 12% already have. The other 88% are running one of the largest uncontrolled experiments in business history.

The experiment does not have to stay uncontrolled.

Xavier Casanova is CEO and Co-founder of Olakai, the leading enterprise AI analytics and governance platform, backed by AI Fund and Andrew Ng. A Silicon Valley entrepreneur for over 25 years, Xavier has founded multiple enterprise SaaS companies and writes about AI strategy and governance at The AI Boardroom on Substack.